
Independent vs. Dependent Variables | Definition & Examples

Published on February 3, 2022 by Pritha Bhandari. Revised on June 22, 2023.

In research, variables are any characteristics that can take on different values, such as height, age, temperature, or test scores.

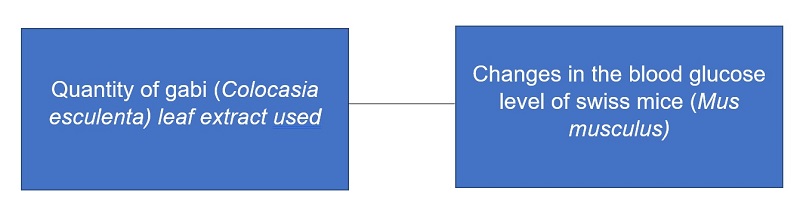

Researchers often manipulate or measure independent and dependent variables in studies to test cause-and-effect relationships.

- The independent variable is the cause. Its value is independent of other variables in your study.

- The dependent variable is the effect. Its value depends on changes in the independent variable.

For example, suppose your independent variable is the temperature of the room. You vary the room temperature by making it cooler for half the participants and warmer for the other half.


An independent variable is the variable you manipulate or vary in an experimental study to explore its effects. It’s called “independent” because it’s not influenced by any other variables in the study.

Independent variables are also called:

- Explanatory variables (they explain an event or outcome)

- Predictor variables (they can be used to predict the value of a dependent variable)

- Right-hand-side variables (they appear on the right-hand side of a regression equation).

These terms are used especially in statistics, where you estimate the extent to which a change in an independent variable can explain or predict changes in the dependent variable.


There are two main types of independent variables.

- Experimental independent variables can be directly manipulated by researchers.

- Subject variables cannot be manipulated by researchers, but they can be used to group research subjects categorically.

Experimental variables

In experiments, you manipulate independent variables directly to see how they affect your dependent variable. The independent variable is usually applied at different levels to see how the outcomes differ.

You can apply just two levels in order to find out if an independent variable has an effect at all.

You can also apply multiple levels to find out how the independent variable affects the dependent variable.

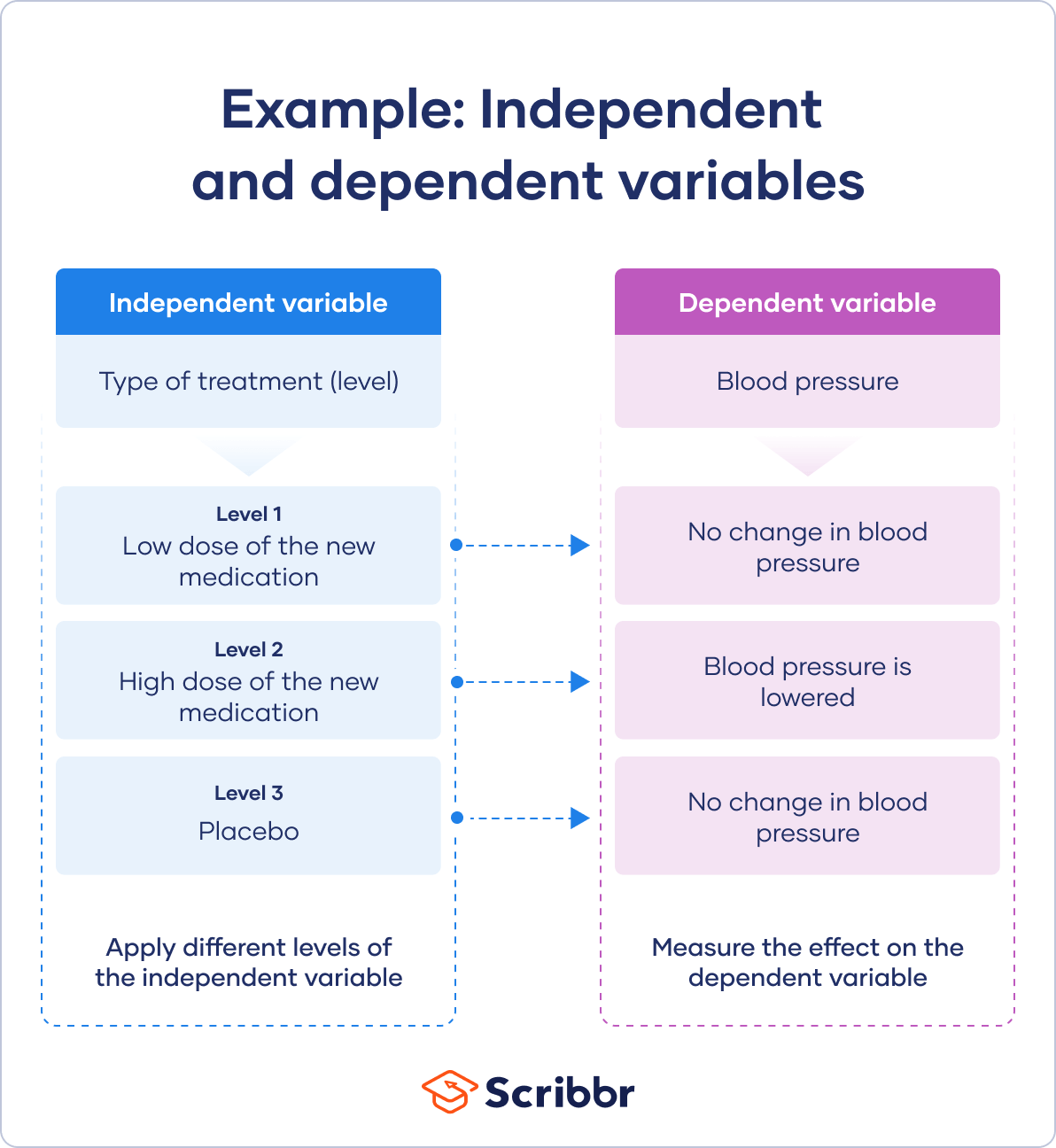

You have three independent variable levels, and each group gets a different level of treatment.

You randomly assign your patients to one of the three groups:

- A low-dose experimental group

- A high-dose experimental group

- A placebo group (to research a possible placebo effect)

A true experiment requires you to randomly assign different levels of an independent variable to your participants.

Random assignment helps you control participant characteristics, so that they don’t affect your experimental results. This helps you to have confidence that your dependent variable results come solely from the independent variable manipulation.
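The random assignment described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the participant IDs are made up, and `assign_groups` is a hypothetical helper name. It shuffles the participants and then deals them out in turn, so group sizes differ by at most one.

```python
import random
from collections import Counter


def assign_groups(participants, levels, seed=None):
    """Randomly assign each participant to one level of the independent variable.

    Shuffles the participants, then deals them out round-robin so that
    group sizes differ by at most one.
    """
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {person: levels[i % len(levels)] for i, person in enumerate(shuffled)}


# Hypothetical participant IDs and the three treatment levels from the example.
participants = [f"P{n:02d}" for n in range(1, 31)]
levels = ["low dose", "high dose", "placebo"]

assignment = assign_groups(participants, levels, seed=42)
group_sizes = Counter(assignment.values())
print(group_sizes)
```

Because the shuffle decides who lands in which group, participant characteristics should be spread roughly evenly across the three levels rather than being chosen by the researcher.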

Subject variables

Subject variables are characteristics that vary across participants, and they can’t be manipulated by researchers. For example, gender identity, ethnicity, race, income, and education are all important subject variables that social researchers treat as independent variables.

It’s not possible to randomly assign these to participants, since these are characteristics of already existing groups. Instead, you can create a research design where you compare the outcomes of groups of participants with different characteristics. This is a quasi-experimental design because there’s no random assignment. Note that any research methods that use non-random assignment are at risk for research biases like selection bias and sampling bias.

Your independent variable is a subject variable, namely the gender identity of the participants. You have three groups: men, women and other.

Your dependent variable is the brain activity response to hearing infant cries. You record brain activity with fMRI scans when participants hear infant cries without their awareness.

A dependent variable is the variable that changes as a result of the independent variable manipulation. It’s the outcome you’re interested in measuring, and it “depends” on your independent variable.

In statistics, dependent variables are also called:

- Response variables (they respond to a change in another variable)

- Outcome variables (they represent the outcome you want to measure)

- Left-hand-side variables (they appear on the left-hand side of a regression equation)

The dependent variable is what you record after you’ve manipulated the independent variable. You use this measurement data to check whether and to what extent your independent variable influences the dependent variable by conducting statistical analyses.

Based on your findings, you can estimate the degree to which your independent variable variation drives changes in your dependent variable. You can also predict how much your dependent variable will change as a result of variation in the independent variable.
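As a rough illustration of estimating how strongly a dependent variable responds to an independent variable, the sketch below fits a straight line by ordinary least squares, with the dependent variable on the left-hand side and the independent variable on the right-hand side. The data are made up for illustration, and `fit_line` and `r_squared` are hypothetical helper names rather than functions from any library.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def r_squared(x, y, slope, intercept):
    """Share of the variance in y that the fitted line accounts for."""
    mean_y = sum(y) / len(y)
    ss_total = sum((yi - mean_y) ** 2 for yi in y)
    ss_residual = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return 1 - ss_residual / ss_total


# Made-up data: room temperature in degrees Celsius (independent variable)
# and test score (dependent variable).
temperature = [18, 20, 22, 24, 26, 28]
score = [82, 80, 79, 75, 74, 70]

slope, intercept = fit_line(temperature, score)
fit = r_squared(temperature, score, slope, intercept)
print(f"score = {intercept:.1f} + ({slope:.2f}) * temperature, R^2 = {fit:.2f}")
```

The slope is the predicted change in the dependent variable per unit change in the independent variable, and R² summarizes how much of the variation in the outcome the line explains. Neither number establishes causation on its own; that depends on the research design.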

Distinguishing between independent and dependent variables can be tricky when designing a complex study or reading an academic research paper.

A dependent variable from one study can be the independent variable in another study, so it’s important to pay attention to research design.

Here are some tips for identifying each variable type.

Recognizing independent variables

Use this list of questions to check whether you’re dealing with an independent variable:

- Is the variable manipulated, controlled, or used as a subject grouping method by the researcher?

- Does this variable come before the other variable in time?

- Is the researcher trying to understand whether or how this variable affects another variable?

Recognizing dependent variables

Check whether you’re dealing with a dependent variable:

- Is this variable measured as an outcome of the study?

- Is this variable dependent on another variable in the study?

- Does this variable get measured only after other variables are altered?


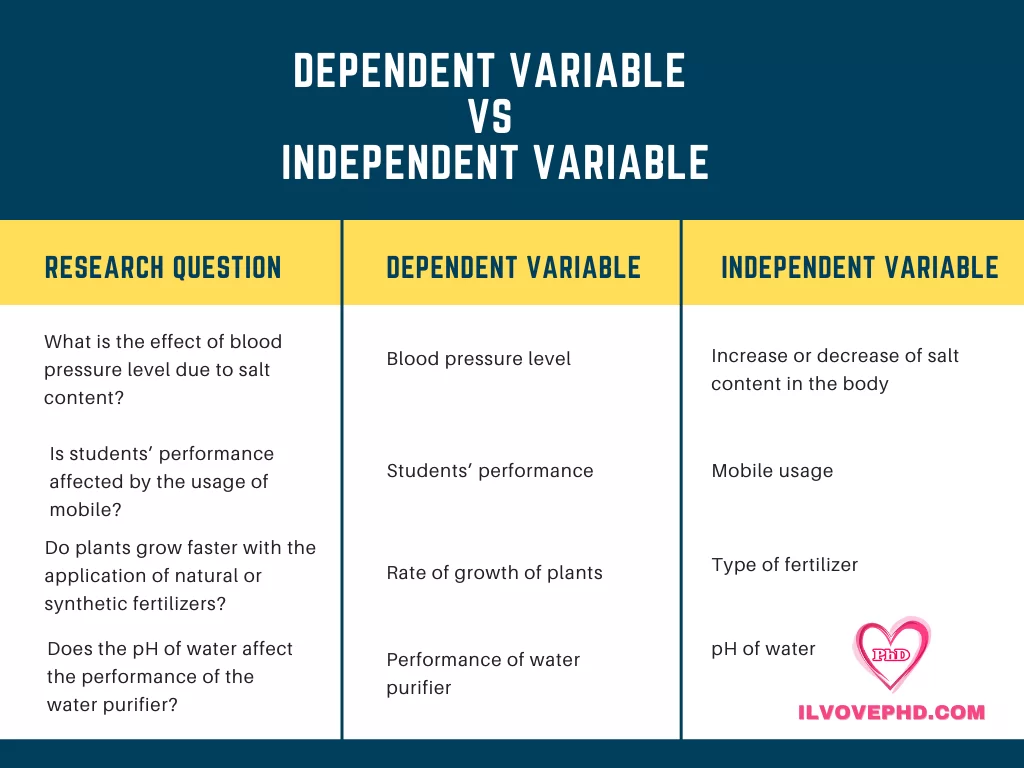

Independent and dependent variables are generally used in experimental and quasi-experimental research.

Here are some examples of research questions and corresponding independent and dependent variables.

| Research question | Independent variable | Dependent variable(s) |
|---|---|---|
| Do tomatoes grow fastest under fluorescent, incandescent, or natural light? | Type of light | Growth rate of the tomato plants |
| What is the effect of intermittent fasting on blood sugar levels? | Intermittent fasting | Blood sugar levels |
| Is medical marijuana effective for pain reduction in people with chronic pain? | Medical marijuana use | Pain levels |
| To what extent does remote working increase job satisfaction? | Remote working | Job satisfaction |

For experimental data, you analyze your results by generating descriptive statistics and visualizing your findings. Then, you select an appropriate statistical test to test your hypothesis.

The type of test is determined by:

- your variable types

- level of measurement

- number of independent variable levels.

You’ll often use t tests or ANOVAs to analyze your data and answer your research questions.
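To make the idea of a t test concrete, here is a minimal sketch that computes Welch's t statistic for two groups, one per level of a two-level independent variable. The scores are made up for illustration and `welch_t` is a hypothetical helper name. The sketch stops at the test statistic and does not compute a p value.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Welch's t statistic for the difference between two independent group means."""
    standard_error = sqrt(
        variance(sample_a) / len(sample_a) + variance(sample_b) / len(sample_b)
    )
    return (mean(sample_a) - mean(sample_b)) / standard_error


# Made-up test scores for two levels of the independent variable.
cool_room = [78, 82, 85, 80, 79, 84]
warm_room = [72, 75, 70, 77, 74, 73]

t_stat = welch_t(cool_room, warm_room)
print(f"t = {t_stat:.2f}")
```

A large absolute t value means the difference between group means is large relative to the variation within the groups. In practice, a statistics library such as SciPy (`scipy.stats.ttest_ind` with `equal_var=False`) would also return the p value; with three or more levels, an ANOVA is the usual counterpart.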

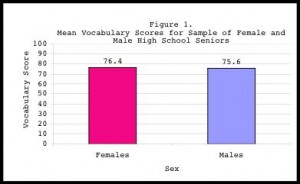

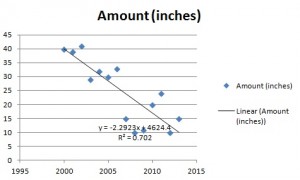

In quantitative research, it’s good practice to use charts or graphs to visualize the results of studies. Generally, the independent variable goes on the x-axis (horizontal) and the dependent variable on the y-axis (vertical).

The type of visualization you use depends on the variable types in your research questions:

- A bar chart is ideal when you have a categorical independent variable.

- A scatter plot or line graph is best when your independent and dependent variables are both quantitative.

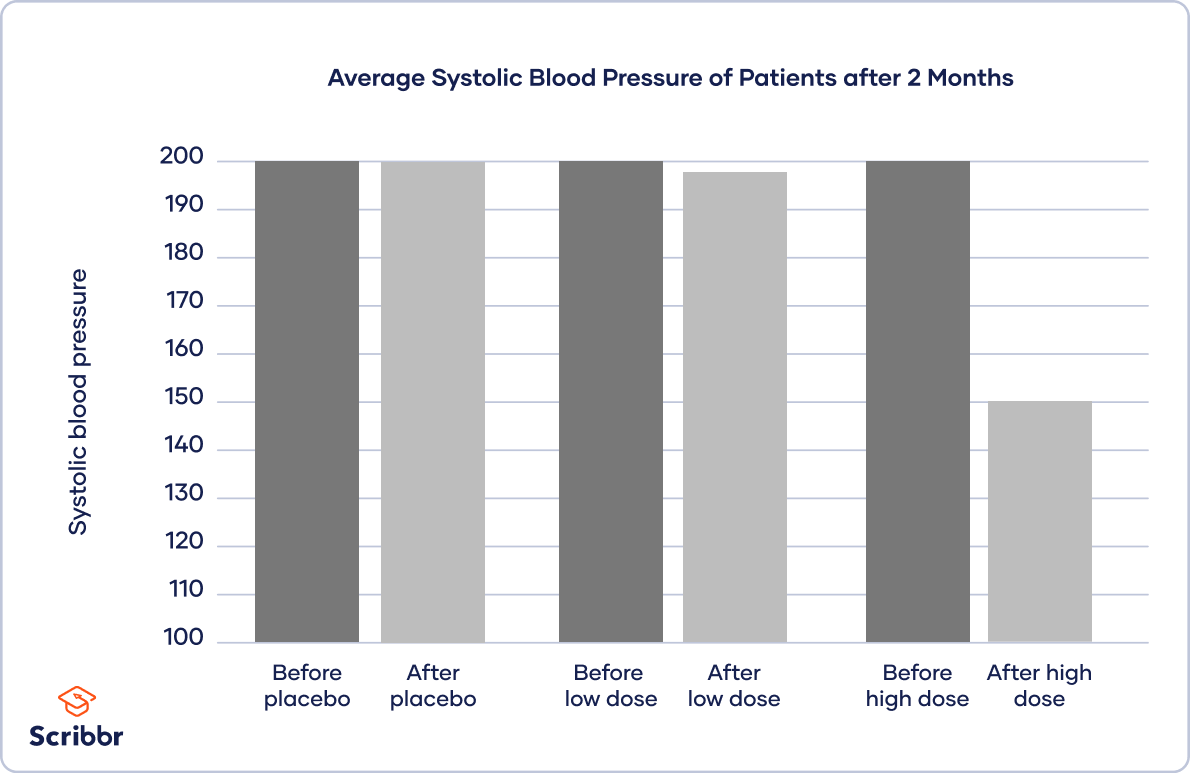

To inspect your data, you place your independent variable of treatment level on the x-axis and the dependent variable of blood pressure on the y-axis.

You plot bars for each treatment group before and after the treatment to show the difference in blood pressure.
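The bar heights for such a chart are simply the group means. The sketch below computes them and prints a rough text rendering so that it runs without a plotting library. The blood pressure readings are made up for illustration.

```python
from statistics import mean

# Made-up systolic blood pressure readings (mmHg) per treatment group.
readings = {
    "placebo": {"before": [150, 148, 152], "after": [149, 147, 151]},
    "low dose": {"before": [151, 149, 150], "after": [142, 140, 144]},
    "high dose": {"before": [150, 152, 148], "after": [131, 133, 129]},
}

# One bar per group and phase: the bar height is the group mean.
bar_heights = {
    group: {phase: mean(values) for phase, values in phases.items()}
    for group, phases in readings.items()
}

# Text rendering: one row per bar, with length proportional to blood pressure.
for group, phases in bar_heights.items():
    for phase, height in phases.items():
        bar = "#" * round(height / 5)
        print(f"{group:<10} {phase:<7} {bar} {height:.0f}")
```

The same `bar_heights` dictionary could be passed to a plotting library to draw grouped bars, with treatment level on the x-axis and blood pressure on the y-axis.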



Determining cause and effect is one of the most important parts of scientific research. It’s essential to know which is the cause – the independent variable – and which is the effect – the dependent variable.

You want to find out how blood sugar levels are affected by drinking diet soda and regular soda, so you conduct an experiment.

- The type of soda – diet or regular – is the independent variable.

- The level of blood sugar that you measure is the dependent variable – it changes depending on the type of soda.

A variable cannot be both independent and dependent at the same time. The value of a dependent variable depends on an independent variable, so within a given relationship a variable must be either the cause or the effect, not both.

You can include more than one independent or dependent variable in a study, but including more than one of either type requires multiple research questions.

For example, if you are interested in the effect of a diet on health, you can use multiple measures of health: blood sugar, blood pressure, weight, pulse, and many more. Each of these is its own dependent variable with its own research question.

You could also choose to look at the effect of exercise levels as well as diet, or even the additional effect of the two combined. Each of these is a separate independent variable.

To ensure the internal validity of an experiment, you should only change one independent variable at a time.


Bhandari, P. (2023, June 22). Independent vs. Dependent Variables | Definition & Examples. Scribbr. Retrieved September 3, 2024, from https://www.scribbr.com/methodology/independent-and-dependent-variables/


Research Variables 101

Independent variables, dependent variables, control variables and more

By: Derek Jansen (MBA) | Expert Reviewed By: Kerryn Warren (PhD) | January 2023

If you’re new to the world of research, especially scientific research, you’re bound to run into the concept of variables sooner or later. If you’re feeling a little confused, don’t worry – you’re not the only one! Independent variables, dependent variables, confounding variables – it’s a lot of jargon. In this post, we’ll unpack the terminology surrounding research variables using straightforward language and loads of examples.


What (exactly) is a variable?

The simplest way to understand a variable is as any characteristic or attribute that can experience change or vary over time or context – hence the name “variable”. For example, the dosage of a particular medicine could be classified as a variable, as the amount can vary (i.e., a higher dose or a lower dose). Similarly, gender, age or ethnicity could be considered demographic variables, because each person varies in these respects.

Within research, especially scientific research, variables form the foundation of studies, as researchers are often interested in how one variable impacts another, and the relationships between different variables. For example:

- How someone’s age impacts their sleep quality

- How different teaching methods impact learning outcomes

- How diet impacts weight (gain or loss)

As you can see, variables are often used to explain relationships between different elements and phenomena. In scientific studies, especially experimental studies, the objective is often to understand the causal relationships between variables. In other words, the role of cause and effect between variables. This is achieved by manipulating certain variables while controlling others – and then observing the outcome. But, we’ll get into that a little later…

The “Big 3” Variables

Variables can be a little intimidating for new researchers because there are a wide variety of variables, and oftentimes, there are multiple labels for the same thing. To lay a firm foundation, we’ll first look at the three main types of variables, namely:

- Independent variables (IV)

- Dependent variables (DV)

- Control variables

What is an independent variable?

Simply put, the independent variable is the “cause” in the relationship between two (or more) variables. In other words, when the independent variable changes, it has an impact on another variable.

For example:

- Increasing the dosage of a medication (Variable A) could result in better (or worse) health outcomes for a patient (Variable B)

- Changing a teaching method (Variable A) could impact the test scores that students earn in a standardised test (Variable B)

- Varying one’s diet (Variable A) could result in weight loss or gain (Variable B).

It’s useful to know that independent variables can go by a few different names, including explanatory variables (because they explain an event or outcome) and predictor variables (because they predict the value of another variable). Terminology aside though, the most important takeaway is that independent variables are assumed to be the “cause” in any cause-effect relationship. As you can imagine, these types of variables are of major interest to researchers, as many studies seek to understand the causal factors behind a phenomenon.


What is a dependent variable?

While the independent variable is the “cause”, the dependent variable is the “effect” – or rather, the affected variable. In other words, the dependent variable is the variable that is assumed to change as a result of a change in the independent variable.

Keeping with the previous example, let’s look at some dependent variables in action:

- Health outcomes (DV) could be impacted by dosage changes of a medication (IV)

- Students’ scores (DV) could be impacted by teaching methods (IV)

- Weight gain or loss (DV) could be impacted by diet (IV)

In scientific studies, researchers will typically pay very close attention to the dependent variable (or variables), carefully measuring any changes in response to hypothesised independent variables. This can be tricky in practice, as it’s not always easy to reliably measure specific phenomena or outcomes – or to be certain that the actual cause of the change is in fact the independent variable.

As the adage goes, correlation is not causation. In other words, just because two variables have a relationship doesn’t mean that it’s a causal relationship – they may just happen to vary together. For example, you could find a correlation between the number of people who own a certain brand of car and the number of people who have a certain type of job. Just because these two numbers are correlated, it doesn’t mean that owning that brand of car causes someone to have that type of job, or vice versa. The correlation could, for example, be caused by another factor such as income level or age group, which would affect both car ownership and job type.

To confidently establish a causal relationship between an independent variable and a dependent variable (i.e., X causes Y), you’ll typically need an experimental design, where you have complete control over the environment and the variables of interest. But even so, this doesn’t always translate into the “real world”. Simply put, what happens in the lab sometimes stays in the lab!

As an alternative to pure experimental research, correlational or “quasi-experimental” research (where the researcher cannot manipulate or change variables) can be done on a much larger scale more easily, allowing one to understand specific relationships in the real world. These types of studies also assume some causality between independent and dependent variables, but it’s not always clear. So, if you go this route, you need to be cautious in terms of how you describe the impact and causality between variables and be sure to acknowledge any limitations in your own research.

What is a control variable?

In an experimental design, a control variable (or controlled variable) is a variable that is intentionally held constant to ensure it doesn’t have an influence on any other variables. As a result, this variable remains unchanged throughout the course of the study. In other words, it’s a variable that’s not allowed to vary – tough life 🙂

As we mentioned earlier, one of the major challenges in identifying and measuring causal relationships is that it’s difficult to isolate the impact of the independent variable from the impact of other variables. Simply put, there’s always a risk that there are factors beyond the ones you’re specifically looking at that might be impacting the results of your study. So, to minimise the risk of this, researchers will attempt (as best possible) to hold other variables constant. These factors are then considered control variables.

Some examples of variables that you may need to control include:

- Temperature

- Time of day

- Noise or distractions

Which specific variables need to be controlled for will vary tremendously depending on the research project at hand, so there’s no generic list of control variables to consult. As a researcher, you’ll need to think carefully about all the factors that could vary within your research context and then consider how you’ll go about controlling them. A good starting point is to look at previous studies similar to yours and pay close attention to which variables they controlled for.

Of course, you won’t always be able to control every possible variable, and so, in many cases, you’ll just have to acknowledge their potential impact and account for them in the conclusions you draw. Every study has its limitations, so don’t get fixated or discouraged by troublesome variables. Nevertheless, always think carefully about the factors beyond what you’re focusing on – don’t make assumptions!

Other types of variables

As we mentioned, independent, dependent and control variables are the most common variables you’ll come across in your research, but they’re certainly not the only ones you need to be aware of. Next, we’ll look at a few “secondary” variables that you need to keep in mind as you design your research.

- Moderating variables

- Mediating variables

- Confounding variables

- Latent variables

Let’s jump into it…

What is a moderating variable?

A moderating variable is a variable that influences the strength or direction of the relationship between an independent variable and a dependent variable. In other words, moderating variables affect how much (or how little) the IV affects the DV, or whether the IV has a positive or negative relationship with the DV (i.e., moves in the same or opposite direction).

For example, in a study about the effects of sleep deprivation on academic performance, gender could be used as a moderating variable to see if there are any differences in how men and women respond to a lack of sleep. In such a case, one may find that gender has an influence on how much students’ scores suffer when they’re deprived of sleep.

It’s important to note that while moderators can have an influence on outcomes, they don’t necessarily cause them; rather they modify or “moderate” existing relationships between other variables. This means that it’s possible for two different groups with similar characteristics, but different levels of moderation, to experience very different results from the same experiment or study design.
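One simple way to see moderation in data is to estimate the IV-DV relationship separately within each level of the moderator and compare the slopes. The sketch below does this for the sleep deprivation example. The numbers are made up, the group labels are placeholders for the levels of a hypothetical moderator, and `slope` is a hypothetical helper name.

```python
def slope(x, y):
    """Least squares slope of y on x."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


# Made-up data: hours of sleep lost (IV) and test score (DV) for two groups
# defined by a hypothetical moderator.
hours_lost = [0, 1, 2, 3, 4]
scores_by_group = {
    "group A": [80, 78, 76, 74, 72],  # loses 2 points per hour of lost sleep
    "group B": [80, 75, 70, 65, 60],  # loses 5 points per hour of lost sleep
}

slopes = {
    group: slope(hours_lost, scores) for group, scores in scores_by_group.items()
}
print(slopes)
```

Here the IV has a negative effect in both groups, but the effect is stronger in group B, which is what it means for the grouping variable to moderate the relationship. A full analysis would typically test this difference formally, for example with an interaction term in a regression model.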

What is a mediating variable?

Mediating variables are often used to explain the relationship between the independent and dependent variable(s). A mediator sits on the path between the two: the independent variable affects the mediator, which in turn affects the dependent variable. For example, if you were researching the effects of age on job satisfaction, then education level could be considered a mediating variable, as it may explain why older people have higher job satisfaction than younger people – they may have more experience or better qualifications, which lead to greater job satisfaction.

Mediating variables also help researchers understand how different factors interact with each other to influence outcomes. For instance, if you wanted to study the effect of stress on academic performance, then coping strategies might act as a mediating factor: stress levels may shape which coping strategies students use, and those strategies in turn influence academic performance. For example, students under stress who adopt effective coping strategies might perform better academically due to their improved mental state.

In addition, mediating variables can provide insight into causal relationships between two variables by helping researchers determine whether changes in one factor directly cause changes in another – or whether there is an indirect relationship between them mediated by some third factor(s). For instance, if you wanted to investigate the impact of parental involvement on student achievement, you would need to consider family dynamics as a potential mediator, since parental involvement could shape family dynamics, which could in turn influence student achievement.

What is a confounding variable?

A confounding variable (also known as a third variable or lurking variable) is an extraneous factor that can influence the relationship between two variables being studied. Specifically, for a variable to be considered a confounding variable, it needs to meet two criteria:

- It must be correlated with the independent variable (this can be causal or not)

- It must have a causal impact on the dependent variable (i.e., influence the DV)

Some common examples of confounding variables include demographic factors such as gender, ethnicity, socioeconomic status, age, education level, and health status. In addition to these, there are also environmental factors to consider. For example, air pollution could confound the impact of the variables of interest in a study investigating health outcomes.

Naturally, it’s important to identify as many confounding variables as possible when conducting your research, as they can heavily distort the results and lead you to draw incorrect conclusions. So, always think carefully about what factors may have a confounding effect on your variables of interest and try to manage these as best you can.
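A small simulation can show how a confounder distorts results. In the sketch below, `x` has no causal effect on `y` at all; both are driven by a shared confounder plus random noise. The data are simulated under that assumption, and `pearson` and `residuals` are hypothetical helper names. Adjusting for the confounder is done here by removing its linear fit from both variables, which is one simple approach among several.

```python
import random


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def residuals(y, z):
    """What is left of y after removing its least squares fit on z."""
    n = len(z)
    mean_z, mean_y = sum(z) / n, sum(y) / n
    slope = sum((a - mean_z) * (b - mean_y) for a, b in zip(z, y)) / sum(
        (a - mean_z) ** 2 for a in z
    )
    return [b - (mean_y + slope * (a - mean_z)) for a, b in zip(z, y)]


rng = random.Random(0)  # fixed seed so the run is reproducible
n = 2000
confounder = [rng.gauss(0, 1) for _ in range(n)]
# Simulated data in which x has NO causal effect on y; both depend on the confounder.
x = [c + rng.gauss(0, 1) for c in confounder]
y = [c + rng.gauss(0, 1) for c in confounder]

raw = pearson(x, y)
adjusted = pearson(residuals(x, confounder), residuals(y, confounder))
print(f"raw correlation: {raw:.2f}, after adjusting for the confounder: {adjusted:.2f}")
```

The raw correlation is clearly positive even though neither variable influences the other, while the adjusted correlation is close to zero. This only works when the confounder has been identified and measured, which is why it pays to think about likely confounders before collecting data.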

What is a latent variable?

Latent variables are unobservable factors that can influence the behaviour of individuals and explain certain outcomes within a study. They’re also known as hidden or underlying variables, and what makes them rather tricky is that they can’t be directly observed or measured. Instead, latent variables must be inferred from other observable data points such as responses to surveys or experiments.

For example, in a study of mental health, the variable “resilience” could be considered a latent variable. It can’t be directly measured, but it can be inferred from measures of mental health symptoms, stress, and coping mechanisms. The same applies to a lot of concepts we encounter every day – for example:

- Emotional intelligence

- Quality of life

- Business confidence

- Ease of use

One way in which we overcome the challenge of measuring the immeasurable is latent variable models (LVMs). An LVM is a type of statistical model that describes a relationship between observed variables and one or more unobserved (latent) variables. These models allow researchers to uncover patterns in their data that may not have been visible before because of the complexity and interrelatedness of the variables involved. Those patterns can then inform hypotheses about previously unknown cause-and-effect relationships among those variables. Powerful stuff, we say!

Let’s recap

In the world of scientific research, there’s no shortage of variable types, some of which have multiple names and some of which overlap with each other. In this post, we’ve covered some of the popular ones, but remember that this is not an exhaustive list.

To recap, we’ve explored:

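- Independent variables (the “cause”)

- Dependent variables (the “effect”)

- Control variables (the variable that’s not allowed to vary)

- Moderating variables (they affect the strength or direction of the relationship between the IV and the DV)

- Mediating variables (they help explain the relationship between the IV and the DV)

- Confounding variables (extraneous factors that can distort the relationship being studied)

- Latent variables (they can’t be directly observed or measured)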


Independent vs Dependent Variables: Definitions & Examples

A variable is an important element of research. It is a characteristic, number, or quantity of any category that can be measured or counted and whose value may change with time or other parameters.

Variables are defined in different ways in different fields. For instance, in mathematics, a variable is an alphabetic character that expresses a numerical value. In algebra, a variable represents an unknown entity, mostly denoted by a, b, c, x, y, z, etc. In statistics, variables represent real-world conditions or factors. Despite the differences in definitions, in all fields, variables represent the entity that changes and help us understand how one factor may or may not influence another factor.

Variables in research and statistics are of different types: independent, dependent, quantitative (discrete or continuous), qualitative (nominal/categorical, ordinal), intervening, moderating, extraneous, confounding, control, and composite. In this article we compare the first two types: independent vs dependent variables.

What is a variable?

Researchers conduct experiments to understand the cause-and-effect relationships between various entities. In such experiments, the entities whose values change are called variables. These variables describe the relationships among various factors and help in drawing conclusions in experiments. They help in understanding how some factors influence others. Some examples of variables include age, gender, race, income, weight, etc.

As mentioned earlier, different types of variables are used in research. Of these, we will compare the most common types: independent vs dependent variables. The independent variable is the cause and the dependent variable is the effect; that is, independent variables influence dependent variables. In research, a dependent variable is the outcome of interest of the study and the independent variable is the factor that may influence the outcome. Here is an example: in a study to analyze the effect of antibiotic use on microbial resistance, antibiotic use is the independent variable and microbial resistance is the dependent variable because antibiotic use affects microbial resistance.(1)

What is an independent variable?

Here is a list of the important characteristics of independent variables.(2,3)

- An independent variable is the factor that is being manipulated in an experiment.

- In a research study, independent variables affect or influence dependent variables and cause them to change.

- Independent variables help gather evidence and draw conclusions about the research subject.

- They’re also called predictors, factors, treatment variables, explanatory variables, and input variables.

- On graphs, independent variables are usually placed on the X-axis.

- Example: In a study on the relationship between screen time and sleep problems, screen time is the independent variable because it influences sleep (the dependent variable).

- In addition, some factors like age are independent variables because other variables such as a person’s income will not change their age.

Types of independent variables

Independent variables in research are of the following two types:(4)

Quantitative

Quantitative independent variables differ in amounts or scales. They are numeric and answer questions like “how many” or “how often.”

Here are a few examples of quantitative independent variables:

- Differences in treatment dosages and frequencies: Useful in determining the appropriate dosage to get the desired outcome.

- Varying salinities: Useful in determining the range of salinity that organisms can tolerate.

Qualitative

Qualitative independent variables are non-numerical variables.

A few examples of qualitative independent variables are listed below:

- Different strains of a species: Useful in identifying the strain of a crop that is most resistant to a specific disease.

- Varying methods of how a treatment is administered: oral or intravenous.

A quantitative variable is represented by actual amounts and a qualitative variable by categories or groups.
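The distinction can be sketched as a small data structure that labels an independent variable by inspecting its levels. This is only an illustration under a simplifying assumption: numeric levels are treated as amounts and everything else as categories. `IndependentVariable` and its `kind` property are hypothetical names, and the levels are made up from the examples above.

```python
from dataclasses import dataclass
from numbers import Number


@dataclass
class IndependentVariable:
    name: str
    levels: list

    @property
    def kind(self):
        """'quantitative' if every level is a numeric amount, else 'qualitative'."""
        numeric = all(
            isinstance(level, Number) and not isinstance(level, bool)
            for level in self.levels
        )
        return "quantitative" if numeric else "qualitative"


# Hypothetical levels based on the examples above.
dosage = IndependentVariable("dosage (mg)", [0, 50, 100])
route = IndependentVariable("administration route", ["oral", "intravenous"])

print(dosage.kind, route.kind)
```

Note the limit of this rule of thumb: categories that happen to be stored as numeric codes (for example, strains numbered 1, 2, 3) would be labelled quantitative, so the researcher still has to decide what the numbers mean.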

What is a dependent variable?

Here are a few characteristics of dependent variables:(3)

- A dependent variable represents a quantity whose value depends on the independent variable and how it is changed.

- The dependent variable is influenced by the independent variable under various circumstances.

- It is also known as the response variable and outcome variable.

- On graphs, dependent variables are placed on the Y-axis.

Here are a few dependent variable examples:

- In a study on the effect of exercise on mood, the dependent variable is mood because it may change with exercise.

- In a study on the effect of pH on enzyme activity, the enzyme activity is the dependent variable because it changes with changing pH.

Types of dependent variables

Dependent variables are of two types:(5)

Continuous dependent variables

These variables can take on any value within a given range and are measured on a continuous scale, for example, weight, height, temperature, time, distance, etc.

Categorical or discrete dependent variables

These variables are divided into distinct categories. They are not measured on a continuous scale so only a limited number of values are possible, for example, gender, race, etc.

Differences between independent and dependent variables

The following table compares independent vs dependent variables.

| | Independent variable | Dependent variable |
| --- | --- | --- |
| How to identify | Manipulated or controlled | Observed or measured |
| Purpose | Cause or predictor variable | Outcome or response variable |
| Relationship | Independent of other variables | Influenced by the independent variable |
| Control | Manipulated or assigned by researcher | Measured or observed during experiments |

Independent and dependent variable examples

Listed below are a few examples of research questions from various disciplines and their corresponding independent and dependent variables. (6)

| Discipline | Research question | Independent variable | Dependent variable |
| --- | --- | --- | --- |
| Genetics | What is the relationship between genetics and susceptibility to diseases? | genetic factors | susceptibility to diseases |
| History | How do historical events influence national identity? | historical events | national identity |
| Political science | What is the effect of political campaign advertisements on voter behavior? | political campaign advertisements | voter behavior |
| Sociology | How does social media influence cultural awareness? | social media exposure | cultural awareness |
| Economics | What is the impact of economic policies on unemployment rates? | economic policies | unemployment rates |
| Literature | How does literary criticism affect book sales? | literary criticism | book sales |
| Geology | How do a region’s geological features influence the magnitude of earthquakes? | geological features | earthquake magnitudes |
| Environment | How do changes in climate affect wildlife migration patterns? | climate changes | wildlife migration patterns |
| Gender studies | What is the effect of gender bias in the workplace on job satisfaction? | gender bias | job satisfaction |
| Film studies | What is the relationship between cinematographic techniques and viewer engagement? | cinematographic techniques | viewer engagement |
| Archaeology | How does archaeological tourism affect local communities? | archaeological tourism | local community development |

Independent vs dependent variables in research

Experiments usually have at least two variables: independent and dependent. The independent variable is the entity that is being tested and the dependent variable is the result. Classifying independent and dependent variables as discrete or continuous can help in determining the type of analysis that is appropriate in any given research experiment, as shown in the table below. (7)

| Independent variable | Discrete dependent variable | Continuous dependent variable |
| --- | --- | --- |
| Discrete | Chi-square, logistic regression, phi, Cramer’s V | t-test, ANOVA, regression, point-biserial correlation |
| Continuous | Logistic regression, point-biserial correlation | Regression, correlation |
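As a rough illustration, the pairing of variable types with candidate analyses can be written as a small lookup. The sketch below is one reading of the table above: it assumes the first column of tests applies to a discrete dependent variable and the second to a continuous one, with the first four rows belonging to a discrete independent variable and the last two to a continuous one. The function name `candidate_tests` is hypothetical, and the result is only a starting point, not a substitute for checking each test's assumptions.

```python
# Candidate analyses keyed by (independent variable type, dependent variable type).
# The grouping is an assumed reading of the table, in line with common practice.
CANDIDATE_TESTS = {
    ("discrete", "discrete"): ["chi-square", "logistic regression", "phi", "Cramer's V"],
    ("discrete", "continuous"): ["t-test", "ANOVA", "regression", "point-biserial correlation"],
    ("continuous", "discrete"): ["logistic regression", "point-biserial correlation"],
    ("continuous", "continuous"): ["regression", "correlation"],
}


def candidate_tests(iv_type: str, dv_type: str) -> list[str]:
    """Return a copy of the candidate analyses for the given variable types."""
    key = (iv_type.lower(), dv_type.lower())
    if key not in CANDIDATE_TESTS:
        raise ValueError(f"Unknown variable types: {iv_type!r}, {dv_type!r}")
    return list(CANDIDATE_TESTS[key])


if __name__ == "__main__":
    # Example: three medications (discrete IV) compared on weight lost (continuous DV).
    print(candidate_tests("discrete", "continuous"))
```

Keeping the mapping in a dictionary rather than in nested conditionals makes it easy to compare against the table row by row.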

Here are some more research questions and their corresponding independent and dependent variables. (6)

| Research question | Independent variable | Dependent variable |
| --- | --- | --- |
| What is the impact of online learning platforms on academic performance? | type of learning | academic performance |
| What is the association between exercise frequency and mental health? | exercise frequency | mental health |
| How does smartphone use affect productivity? | smartphone use | productivity levels |
| Does family structure influence adolescent behavior? | family structure | adolescent behavior |
| What is the impact of nonverbal communication on job interviews? | nonverbal communication | job interviews |

How to identify independent vs dependent variables

In addition to the characteristics of independent and dependent variables listed previously, here are a few simple steps to identify the variable types in a research question. (8)

- Keep in mind that there are no specific words that will always describe dependent and independent variables.

- If you’re given a paragraph, convert that into a question and identify specific words describing cause and effect.

- The word representing the cause is the independent variable and that describing the effect is the dependent variable.

Let’s try out these steps with an example.

A researcher wants to conduct a study to see if his new weight loss medication performs better than two best-selling alternatives. He wants to randomly select 20 subjects from Richmond, Virginia, aged 20 to 30 years and weighing above 60 pounds. Each subject will be randomly assigned to one of three treatment groups.

To identify the independent and dependent variables, we convert this paragraph into a question, as follows: Does the new medication perform better than the alternatives? Here, the medications are the independent variable and their performances or effect on the individuals are the dependent variable.
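The random assignment step in this example can be sketched in a few lines of Python. This is a minimal illustration, not the researcher's actual procedure: the subject IDs, the group labels, and the helper name `assign_to_groups` are all hypothetical. Subjects are shuffled and then dealt round-robin, so group sizes differ by at most one.

```python
import random


def assign_to_groups(subjects, group_names, seed=None):
    """Shuffle subjects and deal them round-robin into the named groups."""
    rng = random.Random(seed)  # a seed makes the assignment reproducible
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    groups = {name: [] for name in group_names}
    for index, subject in enumerate(shuffled):
        groups[group_names[index % len(group_names)]].append(subject)
    return groups


if __name__ == "__main__":
    subjects = [f"S{n:02d}" for n in range(1, 21)]  # 20 hypothetical subject IDs
    medications = ["new medication", "alternative A", "alternative B"]
    for medication, members in assign_to_groups(subjects, medications, seed=42).items():
        print(medication, len(members), members)
```

The medication each subject receives is the independent variable; whatever is measured afterwards, such as weight lost, is the dependent variable.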

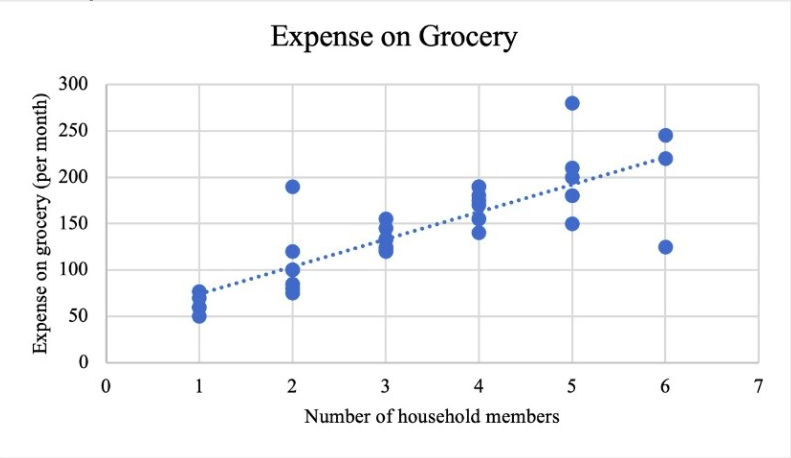

Visualizing independent vs dependent variables

Data visualization is the graphical representation of information using charts, graphs, and maps. Visualizations make data more understandable by making it easier to compare elements and to identify trends and relationships among variables.

Bar graphs, pie charts, and scatter plots are the best methods to graphically represent variables. While pie charts and bar graphs are suitable for depicting categorical data, scatter plots are appropriate for quantitative data. The independent variable is usually placed on the X-axis and the dependent variable on the Y-axis.

Figure 1 is a scatter plot that depicts the relationship between the number of household members and their monthly grocery expenses. (9) The number of household members is the independent variable and the expenses are the dependent variable. The graph shows that as the number of members increases, the expenditure also increases.
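The trend described for such a scatter plot can be summarized with a least-squares line through the points. The sketch below uses made-up numbers, not the data behind Figure 1, and the helper name `least_squares_line` is hypothetical. A positive slope corresponds to the dependent variable rising as the independent variable increases.

```python
def least_squares_line(x_values, y_values):
    """Return (slope, intercept) of the ordinary least-squares line y = slope * x + intercept."""
    n = len(x_values)
    if n != len(y_values) or n < 2:
        raise ValueError("Need two equally long sequences with at least two points")
    mean_x = sum(x_values) / n
    mean_y = sum(y_values) / n
    sxx = sum((x - mean_x) ** 2 for x in x_values)
    if sxx == 0:
        raise ValueError("x_values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(x_values, y_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


if __name__ == "__main__":
    # Invented illustrative data; units are arbitrary currency.
    household_members = [1, 2, 3, 4, 5, 6]              # independent variable (X-axis)
    monthly_groceries = [210, 340, 455, 590, 700, 845]  # dependent variable (Y-axis)
    slope, intercept = least_squares_line(household_members, monthly_groceries)
    print(f"Estimated increase per additional member: {slope:.1f}")
```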

Key takeaways

Let’s summarize the key takeaways about independent vs dependent variables from this article:

- A variable is any entity being measured in a study.

- A dependent variable is often the focus of a research study and is the response or outcome. It depends on or varies with changes in other variables.

- Independent variables cause changes in dependent variables and don’t depend on other variables.

- An independent variable can influence a dependent variable, but a dependent variable cannot influence an independent variable.

- An independent variable is the cause and the dependent variable is the effect.

Frequently asked questions

1. What are the different types of variables used in research?

The following table lists the different types of variables used in research. (10)

| Type of variable | Definition | Example |
| --- | --- | --- |
| Categorical | Measures a construct that has different categories | gender, race, religious affiliation, political affiliation |
| Quantitative | Measures constructs that vary by degree of the amount | weight, height, age, intelligence scores |
| Independent (IV) | Measures constructs considered to be the cause | Higher education (IV) leads to higher income (DV) |
| Dependent (DV) | Measures constructs that are considered the effect | Exercise (IV) will reduce anxiety levels (DV) |
| Intervening or mediating (MV) | Measures constructs that intervene or stand in between the cause and effect | Incarcerated individuals are more likely to have psychiatric disorder (MV), which leads to disability in social roles |
| Confounding (CV) | “Rival explanations” that explain the cause-and-effect relationship | Age (CV) explains the relationship between increased shoe size and increase in intelligence in children |
| Control variable | Extraneous variables whose influence can be controlled or eliminated | Demographic data such as gender, socioeconomic status, age |

2. Why is it important to differentiate between independent and dependent variables?

Differentiating between independent and dependent variables is important for applying them correctly in your own research and for understanding other studies correctly. An incorrectly framed research question can lead to confusion and inaccurate results. An easy way to differentiate is to identify the cause and the effect.

3. How are independent and dependent variables used in non-experimental research?

So far in this article, we have discussed variables in relation to experimental research, in which variables are manipulated or measured to test a hypothesis, that is, to observe the effect on dependent variables. Let’s examine non-experimental research and how variables are used in it. (11) In non-experimental research, variables are not manipulated but are observed in their natural state. Researchers do not have control over the variables and cannot manipulate them based on their research requirements. For example, a study examining the relationship between income and education level would not manipulate either variable. Instead, the researcher would observe and measure the levels of each variable in the sample population. The level of control researchers have is the major difference between experimental and non-experimental research. Another difference is the causal relationship between the variables. In non-experimental research, it is not possible to establish a causal relationship because other variables may be influencing the outcome.

4. Are there any advantages and disadvantages of using independent vs dependent variables?

Here are a few advantages and disadvantages of both independent and dependent variables. (12)

Advantages:

- Dependent variables are not liable to any form of bias because they cannot be manipulated by researchers or other external factors.

- Independent variables are easily obtainable and, unlike dependent variables, don’t require complex mathematical procedures to be observed. This is because researchers can easily manipulate these variables or collect the data from respondents.

- Some independent variables are natural factors and cannot be manipulated. They are also easily obtainable because less time is required for data collection.

Disadvantages:

- Obtaining dependent variables is a very expensive and effort- and time-intensive process because these variables are obtained from longitudinal research by solving complex equations.

- Independent variables are prone to researcher and respondent bias because they can be manipulated, and this may affect the study results.

We hope this article has provided you with an insight into the use and importance of independent vs dependent variables, which can help you effectively use variables in your next research study.

1. Kaliyadan F, Kulkarni V. Types of variables, descriptive statistics, and sample size. Indian Dermatol Online J. 2019 Jan-Feb; 10(1): 82–86. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6362742/

2. What Is an independent variable? (with uses and examples). Indeed website. Accessed March 11, 2024. https://www.indeed.com/career-advice/career-development/what-is-independent-variable

3. Independent and dependent variables: Differences & examples. Statistics by Jim website. Accessed March 10, 2024. https://statisticsbyjim.com/regression/independent-dependent-variables/

4. Independent variable. Biology online website. Accessed March 9, 2024. https://www.biologyonline.com/dictionary/independent-variable#:~:text=The%20independent%20variable%20in%20research,how%20many%20or%20how%20often

5. Dependent variables: Definition and examples. Clubz Tutoring Services website. Accessed March 10, 2024. https://clubztutoring.com/ed-resources/math/dependent-variable-definitions-examples-6-7-2/

6. Research topics with independent and dependent variables. Good research topics website. Accessed March 12, 2024. https://goodresearchtopics.com/research-topics-with-independent-and-dependent-variables/

7. Levels of measurement and using the correct statistical test. Univariate quantitative methods. Accessed March 14, 2024. https://web.pdx.edu/~newsomj/uvclass/ho_levels.pdf

8. Easiest way to identify dependent and independent variables. Afidated website. Accessed March 15, 2024. https://www.afidated.com/2014/07/how-to-identify-dependent-and.html

9. Choosing data visualizations. Math for the people website. Accessed March 14, 2024. https://web.stevenson.edu/mbranson/m4tp/version1/environmental-racism-choosing-data-visualization.html

10. Trivedi C. Types of variables in scientific research. Concepts Hacked website. Accessed March 15, 2024. https://conceptshacked.com/variables-in-scientific-research/

11. Variables in experimental and non-experimental research. Statistics solutions website. Accessed March 14, 2024. https://www.statisticssolutions.com/variables-in-experimental-and-non-experimental-research/#:~:text=The%20independent%20variable%20would%20be,state%20instead%20of%20manipulating%20them

12. Dependent vs independent variables: 11 key differences. Formplus website. Accessed March 15, 2024. https://www.formpl.us/blog/dependent-independent-variables


Variables in Quantitative Research: A Beginner's Guide – SOBT

Quantitative variables.

Because quantitative methodology requires measurement, the concepts being investigated need to be defined in a way that can be measured. Organizational change, reading comprehension, emergency response, or depression are concepts but they cannot be measured as such. Frequency of organizational change, reading comprehension scores, emergency response time, or types of depression can be measured. They are variables (concepts that can vary).

Quantitative research involves many kinds of variables. There are four main types:

- Independent variables (IV).

- Dependent variables (DV).

- Sample variables.

- Extraneous variables.

Each is discussed below.

Independent Variables (IV)

Independent variables (IV) are those that are suspected of being the cause in a causal relationship. If you are asking a cause and effect question, your IV will be the variable (or variables if more than one) that you suspect causes the effect.

There are two main sorts of IV, active independent variables and attribute independent variables:

- Active IV are interventions or conditions that are being applied to the participants. A special tutorial for the third graders, a new therapy for clients, or a new training program being tested on employees would be active IVs.

- Attribute IV are intrinsic characteristics of the participants that are suspected of causing a result. For example, if you are examining whether gender—which is intrinsic to the participants—results in higher or lower scores on some skill, gender is an attribute IV.

Both types of IV can have what are called levels. For example:

- In the example above of the active IV special tutorial, receiving the tutorial is one level, and having the tutorial withheld (control) is a second level.

- In the same example, being a third grader would be an attribute IV. It could be defined as only one level (being in third grade), or you might wish to define it with more than one level, such as first half of third grade and second half of third grade. Indeed, that attribute IV could take many more levels, for example, if you wished to look at each month of third grade.

Independent variables are frequently called different things depending on the nature of the research question. In predictive questions where a variable is thought to predict another but it is not yet appropriate to ask whether it causes the other, the IV is usually called a predictor variable rather than an independent variable.

Dependent Variables (DV)

Dependent variables are those that are influenced by the independent variables. If you ask, "Does A cause [or predict or influence or affect, and so on] B?," then B is the dependent variable (DV).

- Dependent variables are variables that depend on or are influenced by the independent variables.

- They are outcomes or results of the influence of the independent variable.

- Dependent variables answer the question: What do I observe happening when I apply the intervention?

- The dependent variable receives the intervention.

In questions where full causation is not assumed, such as a predictive question or a question about differences between groups but no manipulation of an IV, the dependent variables are usually called outcome or criterion variables, and the independent variables are usually called predictor variables.

Sample Variables

In some studies, some characteristic of the participants must be measured for some reason, but that characteristic is not the IV or the DV. In this case, these are called sample variables. For example, suppose you are investigating whether servant leadership style affects organizational performance and successful financial outcomes. In order to obtain a sample of servant leaders, a standard test of leadership style will be administered. So the presence or absence of servant leadership style will be a sample variable. That score is not used as an IV or a DV, but simply to get the appropriate people into the sample.

When there is no measure of a characteristic of the participants, the characteristic is called a "sample characteristic." When the characteristic must be measured, it is called a "sample variable."

Extraneous Variables

Extraneous variables are not of interest to the study but may influence the dependent variable. For this reason, most quantitative studies attempt to control extraneous variables. The literature should inform you what extraneous variables to account for.

There is a special class of extraneous variables called confounding variables. These are variables that can cause the effect we are looking for if they are not controlled for, resulting in a false finding that the IV is effective when it is not. In a study of changes in skill levels in a group of workers after a training program, if the follow-up measure is taken relatively late after the training, the simple effect of practicing the skills might explain improved scores, and the training might be mistakenly thought to be successful when it was not.
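The logic of a confounding variable can be illustrated with a small simulation. Everything in the sketch below is invented for illustration: a confounder drives both a presumed cause and an outcome, while the presumed cause has no direct effect on the outcome. The two variables still correlate strongly until the confounder is controlled for, here by correlating the residuals left after a linear fit on the confounder. The function names are hypothetical.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def residuals(ys, xs):
    """Residuals of ys after removing its least-squares linear fit on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]


def simulate(n=2000, seed=0):
    """Return (raw, adjusted) correlations between presumed cause and outcome."""
    rng = random.Random(seed)
    confounder = [rng.uniform(0, 10) for _ in range(n)]
    presumed_cause = [c + rng.gauss(0, 1) for c in confounder]
    # The outcome depends only on the confounder, never on presumed_cause.
    outcome = [2 * c + rng.gauss(0, 2) for c in confounder]
    raw = pearson(presumed_cause, outcome)
    adjusted = pearson(residuals(presumed_cause, confounder), residuals(outcome, confounder))
    return raw, adjusted


if __name__ == "__main__":
    raw, adjusted = simulate()
    print(f"raw correlation: {raw:.2f}, after controlling for the confounder: {adjusted:.2f}")
```

In the training example above, time spent practicing would play the role of the confounder: it could raise follow-up scores whether or not the training itself had any effect.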

There are many details about variables not covered in this handout. Please consult any text on research methods for a more comprehensive review.


Independent and Dependent Variables

This guide discusses how to identify independent and dependent variables effectively and incorporate their description within the body of a research paper.

A variable can be anything you might aim to measure in your study, whether in the form of numerical data or reflecting complex phenomena such as feelings or reactions. Dependent variables change due to the other factors measured, especially if a study employs an experimental or semi-experimental design. Independent variables are stable: they are both presumed causes and conditions in the environment or milieu being manipulated.

Identifying Independent and Dependent Variables

Even though the definitions of the terms independent and dependent variables may appear to be clear, in the process of analyzing data resulting from actual research, identifying the variables properly might be challenging. Here is a simple rule that you can apply at all times: the independent variable is what a researcher changes, whereas the dependent variable is affected by these changes. To illustrate the difference, a number of examples are provided below.

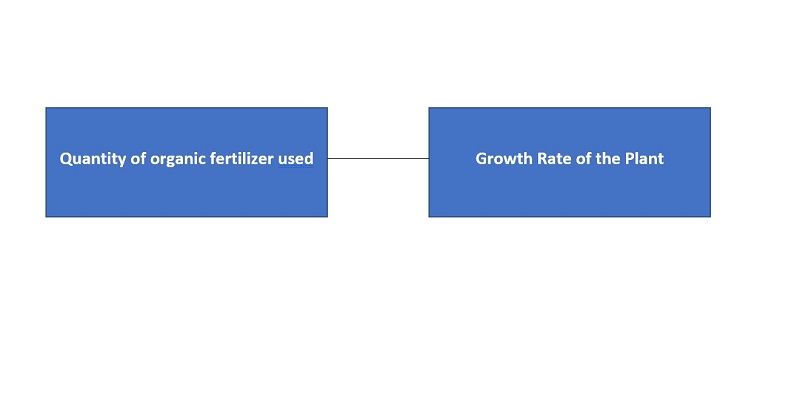

- The purpose of Study 1 is to measure the impact of different plant fertilizers on how many fruits apple trees bear. Independent variable: plant fertilizers (chosen by researchers). Dependent variable: fruits that the trees bear (affected by choice of fertilizers).

- The purpose of Study 2 is to find an association between living in close vicinity to hydraulic fracturing sites and respiratory diseases. Independent variable: proximity to hydraulic fracturing sites (a presumed cause and a condition of the environment). Dependent variable: the percentage or likelihood of suffering from respiratory diseases.

Confusion is possible in identifying independent and dependent variables in the social sciences. When considering psychological phenomena and human behavior, it can be difficult to distinguish between cause and effect. For example, the purpose of Study 3 is to establish how tactics for coping with stress are linked to the level of stress resilience in college students. Even though it is feasible to speculate that these variables are interdependent, the following factors should be taken into account in order to clearly define which variable is dependent and which is independent.

- The dependent variable is usually the objective of the research. In the study under examination, the levels of stress resilience are being investigated.

- The independent variable precedes the dependent variable. The chosen stress-related coping techniques help to build resilience; thus, they occur earlier.

Writing Style and Structure

Usually, the variables are first described in the introduction of a research paper and then in the method section. No strict guidelines for approaching the subject exist; however, academic writing demands that the researcher make clear and concise statements. It is only reasonable not to leave readers guessing which of the variables is dependent and which is independent. The description should reflect the literature review, where both types of variables are identified in the context of the previous research. For instance, in the case of Study 3, a researcher would have to provide an explanation as to the meaning of stress resilience and coping tactics.

In properly organizing a research paper, it is essential to outline and operationalize the appropriate independent and dependent variables. Moreover, the paper should differentiate clearly between independent and dependent variables. The dependent variable is typically the objective of a study, whereas independent variables reflect influencing factors that can be manipulated. Distinguishing between the two types of variables in social sciences may be somewhat challenging as it can be easy to confuse cause with effect. Academic format calls for the author to mention the variables in the introduction and then provide a detailed description in the method section.


Operationalize a Variable: A Step-by-Step Guide to Quantifying Your Research Constructs

Operationalizing a variable is a fundamental step in transforming abstract research constructs into measurable entities. This process allows researchers to quantify variables, enabling the empirical testing of hypotheses within quantitative research. The guide provided here aims to demystify the operationalization process with a structured approach, equipping scholars with the tools to translate theoretical concepts into practical, quantifiable measures.

Key Takeaways

- Operationalization is crucial for converting theoretical constructs into measurable variables, forming the backbone of empirical research.

- Identifying the right variables involves distinguishing between constructs and variables, and selecting those that align with the research objectives.

- The validity and reliability of measurements are ensured by choosing appropriate measurement instruments and calibrating them for consistency.

- Quantitative analysis of qualitative data requires careful operationalization to maintain the integrity and applicability of research findings.

- Operationalization impacts research outcomes by influencing study validity, generalizability, and contributing to the academic field's advancement.

Understanding the Concept of Operationalization in Research

Defining operationalization.

Operationalization is the cornerstone of quantitative research, transforming abstract concepts into measurable entities. It is the process by which you translate theoretical constructs into variables that can be empirically measured. This crucial step allows you to quantify the phenomena of interest, paving the way for systematic investigation and analysis.

To operationalize a variable effectively, you must first clearly define the construct and then determine the specific ways in which it can be observed and quantified. For instance, if you're studying the concept of 'anxiety,' you might operationalize it by measuring heart rate, self-reported stress levels, or the frequency of anxiety-related behaviors.

Consider the following aspects when operationalizing your variables:

- The type of variable (e.g., binary, continuous, categorical)

- The units of measurement (e.g., dollars, frequency, Likert scale)

- The method of data collection (e.g., surveys, observations, physiological measures)

By meticulously defining and measuring your variables, you ensure that your research can be rigorously tested and validated, contributing to the robustness and credibility of your findings.
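One way to keep these aspects together is to record each operational definition as a small structured record. The sketch below is only an illustration: the class name `OperationalDefinition`, its fields, and the specific units and scales shown are assumptions, not part of any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalDefinition:
    """One measurable indicator for an abstract construct."""
    construct: str          # abstract concept, e.g. "anxiety"
    indicator: str          # what is actually observed
    variable_type: str      # e.g. "binary", "continuous", "categorical"
    unit: str               # e.g. "beats per minute", "Likert 1-5"
    collection_method: str  # e.g. "survey", "observation", "physiological measure"


# Three hypothetical ways to operationalize the same construct.
anxiety_measures = [
    OperationalDefinition("anxiety", "heart rate", "continuous",
                          "beats per minute", "physiological measure"),
    OperationalDefinition("anxiety", "self-reported stress level", "ordinal",
                          "Likert 1-5", "survey"),
    OperationalDefinition("anxiety", "anxiety-related behaviors", "count",
                          "occurrences per session", "observation"),
]

for measure in anxiety_measures:
    print(f"{measure.construct}: {measure.indicator} "
          f"({measure.unit}, via {measure.collection_method})")
```

Writing the definitions down in this form makes it obvious when a construct has no indicator yet, or when two indicators for the same construct use incompatible scales.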

The Role of Operationalization in Quantitative Research

In quantitative research, operationalization is the cornerstone that bridges the gap between abstract concepts and measurable outcomes. It involves defining your research variables in practical, quantifiable terms, allowing for precise data collection and analysis. Operationalization transforms theoretical constructs into indicators that can be empirically tested, ensuring that your study can be objectively evaluated against your hypotheses.

Operationalization is not just about measurement, but about the meaning behind the numbers. It requires careful consideration to select the most appropriate indicators for your variables. For instance, if you're studying educational achievement, you might operationalize this as GPA, standardized test scores, or graduation rates. Each choice has implications for what aspect of 'achievement' you're measuring:

- GPA reflects consistent performance across a variety of subjects.

- Standardized test scores may indicate aptitude in specific areas.

- Graduation rates can signify the completion of an educational milestone.

By operationalizing variables effectively, you lay the groundwork for a robust quantitative study. This process ensures that your research can be replicated and that your findings contribute meaningfully to the existing body of knowledge.

Differences Between Endogenous and Exogenous Variables

In the realm of research, understanding the distinction between endogenous and exogenous variables is crucial for designing robust experiments and drawing accurate conclusions. Endogenous variables are those that are influenced within the context of the study, often affected by other variables in the system. In contrast, exogenous variables are external factors that are not influenced by the system under study but can affect endogenous variables.

When operationalizing variables, it is essential to identify which are endogenous and which are exogenous to establish clear causal relationships. Exogenous variables are typically manipulated to observe their effect on endogenous variables, thereby testing hypotheses about causal links. For example, in a study on education outcomes, student motivation might be an endogenous variable, while teaching methods could be an exogenous variable manipulated by the researcher.

Consider the following points to differentiate between these two types of variables:

- Endogenous variables are outcomes within the system, subject to influence by other variables.

- Exogenous variables serve as inputs or causes that can be controlled or manipulated.

- The operationalization of endogenous variables requires careful consideration of how they are measured and how they interact with other variables.

- Exogenous variables, while not requiring operationalization, must be selected with an understanding of their potential impact on the system.

Identifying Variables for Operationalization

Distinguishing between variables and constructs.

In the realm of research, it's crucial to differentiate between variables and constructs. A variable is a specific, measurable characteristic that can vary among participants or over time. Constructs, on the other hand, are abstract concepts that are not directly observable and must be operationalized into measurable variables. For example, intelligence is a construct that can be operationalized by measuring IQ scores, which are variables.

Variables can be classified into different types, each with its own method of measurement. Here's a brief overview of these types:

- Continuous: Can take on any value within a range (e.g., height, weight).

- Ordinal: Represent order without specifying the magnitude of difference (e.g., socioeconomic status levels).

- Nominal: Categories without a specific order (e.g., types of fruit).

- Binary: Two categories, often representing presence or absence (e.g., employed/unemployed).

- Count: The number of occurrences (e.g., number of visits to a website).

When you embark on your research journey, ensure that you clearly identify each construct and the corresponding variable that will represent it in your study. This clarity is the foundation for a robust and credible research design.

Criteria for Selecting Variables

When you embark on the journey of operationalizing variables for your research, it is crucial to apply a systematic approach to variable selection. Variables should be chosen based on their relevance to your research questions and hypotheses, ensuring that they directly contribute to the investigation of your theoretical constructs.

Consider the type of variable you are dealing with—whether it is continuous, ordinal, nominal, binary, or count. Each type has its own implications for how data will be collected and analyzed. For instance, continuous variables allow for a wide range of values, while binary variables are restricted to two possible outcomes. Here is a brief overview of variable types and their characteristics:

- Continuous: Can take on any value within a range

- Ordinal: Values have a meaningful order but intervals are not necessarily equal

- Nominal: Categories without a meaningful order

- Binary: Only two possible outcomes

- Count: Integer values that represent the number of occurrences

Additionally, ensure that the levels of the variable encompass all possible values and that these levels are clearly defined. For binary and ordinal variables, this means specifying the two outcomes or the order of values, respectively. For continuous variables, define the range and consider using categories like 'above X' or 'below Y' if there are no natural bounds to the values.
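For a continuous variable without natural bounds, exhaustive levels can be built from a list of cut points, with an open-ended level at each extreme. The sketch below is a minimal illustration of that idea; the function names and the cut points in the example are hypothetical.

```python
import bisect


def make_level_labels(cut_points):
    """Build labels for the levels defined by strictly ascending cut points."""
    if not cut_points or list(cut_points) != sorted(set(cut_points)):
        raise ValueError("cut_points must be a non-empty, strictly ascending sequence")
    labels = [f"below {cut_points[0]}"]
    labels += [f"{low} to below {high}" for low, high in zip(cut_points, cut_points[1:])]
    labels.append(f"{cut_points[-1]} or above")
    return labels


def assign_level(value, cut_points):
    """Return the label of the single level that contains value."""
    labels = make_level_labels(cut_points)
    # bisect_right counts the cut points that are <= value, which is the level index.
    return labels[bisect.bisect_right(cut_points, value)]


if __name__ == "__main__":
    income_cuts = [20000, 40000, 60000]  # hypothetical annual income cut points
    for income in (5000, 20000, 59999, 250000):
        print(income, "->", assign_level(income, income_cuts))
```

Because the first and last levels are open-ended, every possible value falls into exactly one level, which is the exhaustiveness requirement described above.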

Lastly, the proxy attribute of the variable should be considered. This refers to the induced variations or treatment conditions in your experiment. For example, if you are studying the effect of a buyer's budget on purchasing decisions, the proxy attribute might include different budget levels such as $5, $10, $20, and $40.
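As a minimal sketch of how such treatment levels might be assigned in practice, the snippet below randomly allocates participants to the four budget levels from the example. The participant IDs, the function name `assign_treatments`, and the round-robin balancing scheme are illustrative assumptions, not part of the original design.

```python
import random
from collections import Counter

# Treatment levels from the budget example in the text (in dollars).
BUDGET_LEVELS = [5, 10, 20, 40]


def assign_treatments(participant_ids, levels, seed=0):
    """Randomly assign participants to levels with near-equal group sizes."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    # Deal the shuffled participants round-robin across the levels.
    return {pid: levels[i % len(levels)] for i, pid in enumerate(shuffled)}


# Hypothetical participant IDs, for illustration only.
participants = [f"P{n:02d}" for n in range(1, 13)]
assignment = assign_treatments(participants, BUDGET_LEVELS)
group_sizes = Counter(assignment.values())
```

Shuffling first and then dealing participants across levels keeps the groups balanced, which simple independent draws would not guarantee in a small sample.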

Developing Hypotheses and Research Questions

After grasping the fundamentals of your research domain, the next pivotal step is to develop a clear and concise hypothesis. This hypothesis will serve as the foundation for your experimental design and guide the direction of your study. Formulating a hypothesis requires a deep understanding of the variables at play and their potential interrelations. It's essential to ensure that your hypothesis is testable and that you have a structured plan for how to test it.

Once your hypothesis is established, you'll need to craft research questions that are both specific and measurable. These questions should stem directly from your hypothesis and break the larger inquiry into more manageable segments. To find a research question, start by identifying key outcomes and the potential causes that might affect them. Then, design an experiment to induce variation in the causes and measure the outcomes. Remember, the clarity of your research questions will significantly impact the effectiveness of your data analysis later on.

To aid in this process, consider the following steps:

- Synthesize the existing literature to identify gaps and opportunities for further investigation.

- Define a clear problem statement that your research will address.

- Establish a purpose statement that guides your inquiry without advocating for a specific outcome.

- Develop a conceptual and theoretical framework to underpin your research.

- Formulate quantitative and qualitative research questions that align with your hypothesis and frameworks.

Effective experimental design involves identifying variables, establishing hypotheses, choosing sample size, and implementing randomization and control groups to ensure reliable and meaningful research results.

Choosing the Right Measurement Instruments

Types of Measurement Instruments

When operationalizing your variables, selecting the right measurement instruments is crucial. These instruments are the tools that translate your theoretical constructs into observable and measurable data. Understanding the different types of measurement instruments helps ensure that your data accurately reflects the constructs you are studying.

Measurement instruments can be broadly categorized into five types: continuous, ordinal, nominal, binary, and count. Each type is suited to different kinds of data and research questions. For instance, a continuous variable, like height, can take on any value within a range, while an ordinal variable represents ordered categories, such as a satisfaction scale.

Here is a brief overview of the types of measurement instruments:

- Continuous: Can take on any value within a range; e.g., temperature, weight.

- Ordinal: Represents ordered categories; e.g., Likert scales for surveys.

- Nominal: Categorizes data without a natural order; e.g., types of fruit, gender.

- Binary: Has only two categories; e.g., yes/no questions, presence/absence.

- Count: Represents the number of occurrences; e.g., the number of visits to a website.

Choosing the appropriate instrument involves considering the nature of your variable, the level of detail required, and the context of your research. For example, if you are measuring satisfaction levels, you might use a Likert scale, which is an ordinal type of instrument. On the other hand, if you are counting the number of times a behavior occurs, a count instrument would be more appropriate.

Ensuring Validity and Reliability

To ensure the integrity of your research, it is crucial to select measurement instruments that are both valid and reliable. Validity refers to the degree to which an instrument accurately measures what it is intended to measure. Reliability, on the other hand, denotes the consistency of the instrument across different instances of measurement.

When choosing your instruments, consider the psychometric properties that have been documented in large cohort studies or previous validations. For instance, scales should have demonstrated internal consistency reliability, which can be assessed using statistical measures such as Cronbach's alpha. It is also important to calibrate your instruments to maintain consistency over time and across various contexts.

Here is a simplified checklist to guide you through the process:

- Review literature for previously validated instruments

- Check for cultural and linguistic validation if applicable

- Assess internal consistency reliability (e.g., Cronbach's alpha)

- Perform pilot testing and calibration

- Plan for ongoing assessment of instrument performance
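To make the internal consistency step concrete, here is a minimal sketch of Cronbach's alpha computed from pilot data using the standard formula, α = k/(k−1) · (1 − Σ item variances / variance of total scores). The function name `cronbach_alpha` and the pilot responses are hypothetical and serve only as an illustration.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for rows of item scores (one row per respondent)."""
    k = len(responses[0])  # number of items in the scale
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    items = list(zip(*responses))  # transpose rows into per-item columns
    item_variance_sum = sum(variance(column) for column in items)
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical pilot data: 5 respondents answering 3 Likert items (1-5).
pilot = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 3, 2],
    [1, 2, 1],
]
alpha = cronbach_alpha(pilot)
```

Values closer to 1 indicate that the items vary together; what counts as acceptable depends on your field and on the purpose of the scale.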

Calibrating Instruments for Consistency

Calibration is a critical step in ensuring that your measurement instruments yield reliable and consistent results. It involves adjusting the instrument to align with a known standard or set of standards. Calibration must be performed periodically to maintain the integrity of data collection over time.

When calibrating instruments, you should follow a systematic approach. Here is a simple list to guide you through the process:

- Identify the standard against which the instrument will be calibrated.

- Compare the instrument's output with the standard.

- Adjust the instrument to minimize any discrepancies.

- Document the calibration process and results for future reference.

It's essential to recognize that different instruments may require unique calibration methods. For instance, a scale used for measuring weight will be calibrated differently than a thermometer used for temperature. Below is an example of how calibration data might be recorded in a table format:

| Instrument | Standard Used | Pre-Calibration Reading | Post-Calibration Adjustment | Date of Calibration |
|---|---|---|---|---|
| Scale | 1 kg Weight | 1.02 kg | -0.02 kg | 2023-04-15 |
| Thermometer | 0°C Ice Bath | 0.5°C | -0.5°C | 2023-04-15 |

Remember, the goal of calibration is not just to adjust the instrument but to understand its behavior and limitations. This understanding is crucial for interpreting the data accurately and ensuring that your research findings are robust and reliable.
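The calibration records in the table above could be kept in code as well. The sketch below assumes a simple single-point calibration, in which the adjustment is a constant offset equal to the standard value minus the instrument's reading. The class name `CalibrationRecord` and the raw reading of 75.32 kg are hypothetical; many instruments need multi-point or non-linear corrections instead.

```python
from dataclasses import dataclass


@dataclass
class CalibrationRecord:
    instrument: str
    standard_value: float  # known value of the reference standard
    reading: float         # what the instrument showed before calibration
    date: str

    @property
    def adjustment(self) -> float:
        # Offset to add to a raw reading so that it matches the standard.
        # Rounded to suppress floating-point noise such as -0.0200000000000018.
        return round(self.standard_value - self.reading, 6)

    def correct(self, raw: float) -> float:
        """Apply the constant-offset correction to a later raw reading."""
        return raw + self.adjustment


# Values taken from the example table.
scale = CalibrationRecord("Scale", 1.0, 1.02, "2023-04-15")
thermometer = CalibrationRecord("Thermometer", 0.0, 0.5, "2023-04-15")

corrected_weight = scale.correct(75.32)  # hypothetical raw reading in kg
```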

Quantifying Variables: From Theory to Practice

Translating Theoretical Constructs into Measurable Variables

Operationalizing a variable is the cornerstone of empirical research, transforming abstract concepts into quantifiable measures. Your ability to effectively operationalize variables is crucial for testing hypotheses and advancing knowledge within your field. Begin by identifying the key constructs of your study and consider how they can be observed in the real world.

For instance, if your research involves the construct of 'anxiety,' you must decide on a method to measure it. Will you use a self-reported questionnaire, physiological indicators, or a combination of both? Each method has implications for the type of data you will collect and how you will interpret it. Below is an example of how you might structure this information:

- Construct: Anxiety

- Measurement Method: Self-reported questionnaire

- Instrument: Beck Anxiety Inventory

- Scale: 0 (no anxiety) to 63 (severe anxiety)

Once you have chosen an appropriate measurement method, ensure that it aligns with your research objectives and provides valid and reliable data. This process may involve adapting existing instruments or developing new ones to suit the specific needs of your study. Remember, the operationalization of your variables sets the stage for the empirical testing of your theoretical framework.
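As an illustration of turning this operationalization into a computed variable, the sketch below scores a questionnaire as the sum of its item responses. It assumes the standard Beck Anxiety Inventory format of 21 items, each rated 0 to 3, which is consistent with the 0 to 63 range listed above. The function name `total_score` is hypothetical.

```python
N_ITEMS = 21                # assumed number of questionnaire items
ITEM_MIN, ITEM_MAX = 0, 3   # assumed rating range for each item


def total_score(item_responses):
    """Sum item ratings into a single score after validating the input."""
    if len(item_responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses, got {len(item_responses)}")
    for rating in item_responses:
        if not isinstance(rating, int) or not ITEM_MIN <= rating <= ITEM_MAX:
            raise ValueError(f"invalid item rating: {rating!r}")
    return sum(item_responses)


# Hypothetical respondent who rates every item as 1.
example_score = total_score([1] * N_ITEMS)
```

Validating each response before summing keeps out-of-range or missing answers from silently distorting the measured variable.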

Assigning Units and Scales of Measurement

Once you have translated your theoretical constructs into measurable variables, the next critical step is to assign appropriate units and scales of measurement. Units are the standards used to quantify the value of your variables, ensuring consistency and robustness in your data. For instance, if you are measuring time spent on a task, your unit might be minutes or seconds.

Variables can be categorized into types such as continuous, ordinal, nominal, binary, or count. This classification aids in selecting the right scale of measurement and is crucial for the subsequent statistical analysis. For example, a continuous variable like height would be measured in units such as centimeters or inches, while an ordinal variable like satisfaction level might be measured on a Likert scale ranging from 'Very Dissatisfied' to 'Very Satisfied'.

Here is a simple table illustrating different variable types and their potential units or scales:

| Variable Type | Example | Unit/Scale |
|---|---|---|
| Continuous | Height | Centimeters (cm) |
| Ordinal | Satisfaction Level | Likert Scale (1-5) |
| Nominal | Blood Type | A, B, AB, O |
| Binary | Gender | Male (1), Female (0) |
| Count | Number of Visits | Count (number of visits) |

Remember, the choice of units and scales will directly impact the validity of your research findings. It is essential to align them with your research objectives and the nature of the data you intend to collect.
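One way to enforce these units and scales at data entry is to encode each raw record against an explicit scheme. The sketch below is illustrative only: the field names, the three middle Likert labels, and the use of employment status as the binary variable are assumptions introduced for the example.

```python
# Ordinal scale: only the two endpoint labels come from the text;
# the three labels in between are assumed for illustration.
LIKERT_LABELS = [
    "Very Dissatisfied",
    "Dissatisfied",
    "Neutral",
    "Satisfied",
    "Very Satisfied",
]
LIKERT_CODES = {label: code for code, label in enumerate(LIKERT_LABELS, start=1)}
BLOOD_TYPES = {"A", "B", "AB", "O"}  # nominal: categories with no order


def encode_participant(raw):
    """Validate one raw record and encode it by variable type."""
    height_cm = float(raw["height_cm"])  # continuous, in centimeters
    if height_cm <= 0:
        raise ValueError("height must be positive")
    visits = int(raw["visits"])          # count: non-negative integer
    if visits < 0:
        raise ValueError("visit count cannot be negative")
    if raw["blood_type"] not in BLOOD_TYPES:
        raise ValueError(f"unknown blood type: {raw['blood_type']!r}")
    return {
        "height_cm": height_cm,
        "satisfaction": LIKERT_CODES[raw["satisfaction"]],  # ordinal, 1-5
        "blood_type": raw["blood_type"],
        "employed": 1 if raw["employed"] else 0,            # binary, 0/1
        "visits": visits,
    }


# Hypothetical raw record.
encoded = encode_participant({
    "height_cm": "172.5",
    "satisfaction": "Satisfied",
    "blood_type": "AB",
    "employed": True,
    "visits": 7,
})
```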

Handling Qualitative Data in Quantitative Analysis

When operationalizing variables, you may need to incorporate qualitative data into a quantitative framework. Operationalization translates abstract concepts into measurable variables, which is crucial for a study's validity and reliability. Qualitative data, however, is rich and descriptive and does not lend itself easily to numerical representation.

To effectively handle qualitative data, you must first systematically categorize the information. This can be done through coding, where themes, patterns, and categories are identified. Once coded, these qualitative elements can be quantified. For example, the frequency of certain themes can be counted, or the presence of specific categories can be used as binary variables (0 for absence, 1 for presence).

Consider the following table that illustrates a simple coding scheme for qualitative responses:

| Theme | Code | Frequency |
|---|---|---|
| Satisfaction | 1 | 45 |
| Improvement Needed | 2 | 30 |
| No Opinion | 3 | 25 |

This table represents a basic way to transform qualitative feedback into quantifiable data, which can then be analyzed using statistical methods. It is essential to ensure that the coding process is consistent and that the interpretation of qualitative data remains faithful to the original context. By doing so, you can enrich your quantitative analysis with the depth that qualitative insights provide, while maintaining the rigor of a quantitative approach.
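The two quantification strategies described above, counting theme frequencies and deriving binary presence indicators, can be sketched as follows. The codebook mirrors the example table, while the coded responses and function names are hypothetical. The sketch assumes a human coder has already assigned one or more codes to each response.

```python
from collections import Counter

# Codebook from the example table: numeric code -> theme.
CODEBOOK = {1: "Satisfaction", 2: "Improvement Needed", 3: "No Opinion"}

# Hypothetical coded responses: the set of codes assigned to each response.
coded_responses = [{1}, {2}, {1, 2}, {3}, {1}]


def theme_frequencies(coded):
    """Count how many responses were tagged with each theme."""
    counts = Counter(code for codes in coded for code in codes)
    return {theme: counts.get(code, 0) for code, theme in CODEBOOK.items()}


def binary_indicators(codes):
    """Encode one response as 0/1 variables (1 = theme present)."""
    return {theme: int(code in codes) for code, theme in CODEBOOK.items()}


frequencies = theme_frequencies(coded_responses)
indicator_rows = [binary_indicators(codes) for codes in coded_responses]
```

Note that the numeric codes are nominal labels: they identify themes and should not be averaged or otherwise treated as quantities.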

Designing the Experimental Framework

Creating a Structural Causal Model (SCM)

In your research, constructing a Structural Causal Model (SCM) is a pivotal step that translates your theoretical understanding into a practical framework. SCMs articulate the causal relationships between variables through a set of equations or functions, allowing you to state clear and testable hypotheses about the phenomena under study. By defining these relationships explicitly, SCMs facilitate the prediction and manipulation of outcomes in a controlled experimental setting.

When developing an SCM, consider the following steps:

- Identify the key variables and their hypothesized causal connections.

- Choose the appropriate mathematical representation for each relationship (e.g., linear, logistic).

- Determine the directionality of the causal effects.

- Specify any interaction terms or non-linear dynamics that may be present.

- Validate the SCM by ensuring it aligns with existing theoretical and empirical evidence.

Remember, the SCM is not merely a statistical tool; it embodies your hypotheses about the causal structure of your research question. As such, it should be grounded in theory and prior research, while also being amenable to empirical testing. The SCM approach circumvents the need to search for causal structures post hoc, as it requires you to specify the causal framework a priori, thus avoiding common pitfalls such as 'bad controls' and ensuring that exogenous variation is properly accounted for.
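A toy example may help show what specifying the causal framework a priori looks like. The sketch below writes a three-variable SCM as structural equations and simulates an intervention that sets X by design. The variables Z, X, and Y, the linear functional forms, and all coefficients are assumptions chosen purely for illustration.

```python
import random


def simulate(n, do_x=None, seed=0):
    """Draw n samples from a toy linear SCM; do_x fixes X by intervention."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = rng.gauss(0, 1)    # exogenous background variable
        u_x = rng.gauss(0, 1)  # noise in X (always drawn so seeds stay aligned)
        u_y = rng.gauss(0, 1)  # noise in Y
        # Structural equation for X, replaced by the fixed value under do(X = x).
        x = 0.8 * z + u_x if do_x is None else do_x
        # Structural equation for Y: depends on X and Z.
        y = 2.0 * x + 1.5 * z + u_y
        rows.append((z, x, y))
    return rows


def mean_y(rows):
    return sum(y for _, _, y in rows) / len(rows)


# Average effect of moving X from 0 to 1 by intervention.
effect = mean_y(simulate(1000, do_x=1.0)) - mean_y(simulate(1000, do_x=0.0))
```

Because both simulated experiments share the same seed, and therefore the same noise draws, the difference in means recovers the coefficient on X in the Y equation. In observational data without intervention, Z would confound the relationship between X and Y.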

Determining the Directionality of Variables

In the process of operationalizing variables, understanding the directionality is crucial. Directed acyclic graphs (DAGs) serve as a fundamental tool in delineating causal relationships between variables. The direction of the arrow in a DAG explicitly indicates the causal flow, which is essential for constructing a valid Structural Causal Model (SCM).
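As a small sketch, a DAG can be stored as a mapping from each cause to its direct effects and then checked for cycles with Python's standard `graphlib` module (Python 3.9+). The variable names and helper functions here are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical causal graph: each key is a cause, each value lists its effects.
edges = {"Z": ["X", "Y"], "X": ["Y"], "Y": []}


def causal_order(edges):
    """Return the variables ordered so that every cause precedes its effects."""
    # TopologicalSorter expects node -> predecessors, so invert the mapping.
    parents = {node: set() for node in edges}
    for cause, effects in edges.items():
        for effect in effects:
            parents.setdefault(effect, set()).add(cause)
    return list(TopologicalSorter(parents).static_order())


def is_dag(edges):
    """True if the arrows contain no cycle, i.e. the graph is a valid DAG."""
    try:
        causal_order(edges)
        return True
    except CycleError:
        return False


order = causal_order(edges)
```

A graph in which A causes B and B causes A fails this check, which signals that the proposed directionality needs rethinking before an SCM is built on it.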

When you classify variables, you must consider their types: continuous, ordinal, nominal, binary, or count. This classification aids not only in understanding the variables' nature but also in selecting the appropriate statistical methods for analysis. Here is a simple representation of variable types and their characteristics:

| Variable Type | Description |

|---|---|